Power consumption depends primarily on the GPU model and the number of GPUs installed.

As a general reference, an 8-GPU server typically falls within the following ranges:

| Configuration | Estimated total power |
|---|---|
| 8 × RTX 4090 AI | roughly 3–4 kW |
| 8 × RTX 5090 AI | roughly 4–5 kW |
| 8 × RTX 6000 PRO | roughly 3–4 kW |
| 8 × H200 NVL | roughly 5–6 kW |

Actual power usage will vary depending on CPU choice, storage configuration and workload intensity.
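The arithmetic behind estimates like these is simple: per-GPU board power times GPU count, plus the draw of the rest of the platform (CPUs, memory, storage, fans). The sketch below is a minimal illustration of that sum. It assumes you supply the per-GPU and platform wattages from the relevant spec sheets. The function name and the example figures are hypothetical placeholders, not measurements of the configurations in the table.

```python
def estimate_server_power_w(gpu_count: int, gpu_watts: float,
                            platform_watts: float, gpu_load: float = 1.0) -> float:
    """Rough power estimate for one GPU server, in watts (illustrative sketch).

    gpu_watts:      assumed per-GPU board power, taken from the card's spec sheet
    platform_watts: assumed draw of CPUs, memory, storage and fans (hypothetical input)
    gpu_load:       fraction of GPU board power the workload actually draws (0..1)
    """
    if gpu_count < 0 or not 0.0 <= gpu_load <= 1.0:
        raise ValueError("gpu_count must be >= 0 and gpu_load must be in [0, 1]")
    return gpu_count * gpu_watts * gpu_load + platform_watts


if __name__ == "__main__":
    # Placeholder figures for illustration only, not vendor or measured values.
    watts = estimate_server_power_w(gpu_count=8, gpu_watts=400, platform_watts=800)
    print(f"{watts / 1000:.1f} kW")  # 8 * 400 + 800 = 4000 W -> "4.0 kW"
```

The `gpu_load` parameter reflects the point above that workload intensity changes actual draw. A lighter job pulls less than the cards' rated power.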

For larger deployments, it’s important to consider rack-level power capacity and cooling. Several GPU servers in the same rack can quickly push total consumption well beyond the power and cooling budget of a typical datacenter rack.
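A rough way to check rack density is to divide the rack's usable power budget by the per-server draw. The sketch below does that. The 15 kW rack, the 5 kW server figure and the 80% usable fraction are all assumptions chosen for illustration. The 80% figure is a common derating convention for continuous loads, but your facility's own rules apply. The function names are hypothetical. The heat conversion uses the standard 1 W ≈ 3.412 BTU/h, since essentially all electrical power a server draws ends up as heat the cooling must remove.

```python
def servers_per_rack(rack_budget_w: int, server_w: int, usable_pct: int = 80) -> int:
    """How many servers of a given draw fit within a rack's power budget.

    usable_pct is an assumed derating margin: only this share of the rack
    budget is planned for continuous load. Confirm the figure with your facility.
    """
    if server_w <= 0 or not 0 < usable_pct <= 100:
        raise ValueError("server_w must be positive and usable_pct in (0, 100]")
    usable_w = rack_budget_w * usable_pct // 100  # integer watts, avoids float rounding
    return usable_w // server_w


def heat_btu_per_hour(watts: float) -> float:
    """Heat load to remove, using 1 W ~= 3.412 BTU/h."""
    return watts * 3.412


if __name__ == "__main__":
    # Hypothetical 15 kW rack and 5 kW servers (placeholders, not quoted limits).
    n = servers_per_rack(15_000, 5_000)
    print(n, "servers,", n * 5_000, "W total")  # 2 servers, 10000 W total
    print(round(heat_btu_per_hour(n * 5_000)), "BTU/h")
```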

If needed, we can help estimate power requirements, cooling considerations and rack density when planning a cluster.

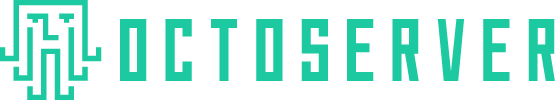

Some platforms also support higher GPU counts. For example, the 4U-E12G-ROME system can be configured with:

- 12 dual-slot GPUs running at PCIe 4.0 x8, or
- 6 larger 3.5-slot GPUs running at PCIe 4.0 x16

This chassis is designed for actively cooled GPUs, allowing higher card density while maintaining stable airflow.

Power requirements scale with the number and type of GPUs installed.
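The two layouts of the 4U-E12G-ROME trade card count against link width. Both come to 96 GPU-facing PCIe 4.0 lanes (12 × 8 and 6 × 16), while total GPU power follows the cards chosen. The sketch below makes that comparison explicit. The per-card wattages (300 W and 450 W) are hypothetical placeholders, not specifications of any particular card. The sketch does not model how the platform routes those lanes (switches, bifurcation), which the description above does not state. Link bandwidth uses the PCIe 4.0 rate of 16 GT/s per lane with 128b/130b encoding, per direction.

```python
from dataclasses import dataclass

PCIE4_GT_PER_LANE = 16.0      # PCIe 4.0 signaling rate, GT/s per lane
PCIE4_ENCODING = 128 / 130    # 128b/130b line-code efficiency


@dataclass
class GpuLayout:
    gpu_count: int
    lanes_per_gpu: int        # PCIe 4.0 link width per card (x8 or x16)
    watts_per_gpu: int        # assumed board power: placeholder, check the card's spec

    @property
    def total_lanes(self) -> int:
        return self.gpu_count * self.lanes_per_gpu

    @property
    def total_gpu_watts(self) -> int:
        return self.gpu_count * self.watts_per_gpu

    @property
    def link_gb_per_s(self) -> float:
        """Approximate per-card host link bandwidth, GB/s per direction."""
        return PCIE4_GT_PER_LANE * PCIE4_ENCODING / 8 * self.lanes_per_gpu


dense = GpuLayout(gpu_count=12, lanes_per_gpu=8, watts_per_gpu=300)  # dual-slot cards
wide = GpuLayout(gpu_count=6, lanes_per_gpu=16, watts_per_gpu=450)   # 3.5-slot cards

if __name__ == "__main__":
    for name, layout in (("12 x x8", dense), ("6 x x16", wide)):
        print(name, layout.total_lanes, "lanes,", layout.total_gpu_watts, "W GPUs,",
              f"{layout.link_gb_per_s:.1f} GB/s per card")
```

The dense layout halves each card's host link bandwidth (about 15.8 GB/s versus 31.5 GB/s). That matters mainly for workloads that move a lot of data between host and GPU.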