Maximize AI and ML potential with our latest GPU Servers

Engineered for diverse workloads in data centers of any scale, OCTOSERVER systems offer versatile, resilient, and scalable rack units. Our servers are designed to optimize AI, ML, and high-performance computing, delivering exceptional performance and reliability.

Superior Performance

Our server hardware is designed to provide sufficient cooling for even the most power-hungry GPUs, such as the RTX 4090 with its 450 W TDP. Compared with some competing server solutions, we have oversized the cooling to be future-proof and to deliver the best thermal performance.

Best Value Assurance

Thanks to full vertical integration and in-house manufacturing, we can offer the best-value enterprise-scale server solutions on the market.

Up To 5-Year Warranty

We offer up to a 5-year warranty on our state-of-the-art enterprise AI server hardware, ensuring your technology investment is secure. Count on us for sustained reliability and outstanding support. We are committed to excellence and longevity.

Global On-Site Support

Our global on-site support team guarantees that you receive prompt, expert assistance whenever and wherever you need it. For large-scale customers, we also provide on-site initial server deployment and setup, as well as “next day on-site” labour and parts service.

Our Products

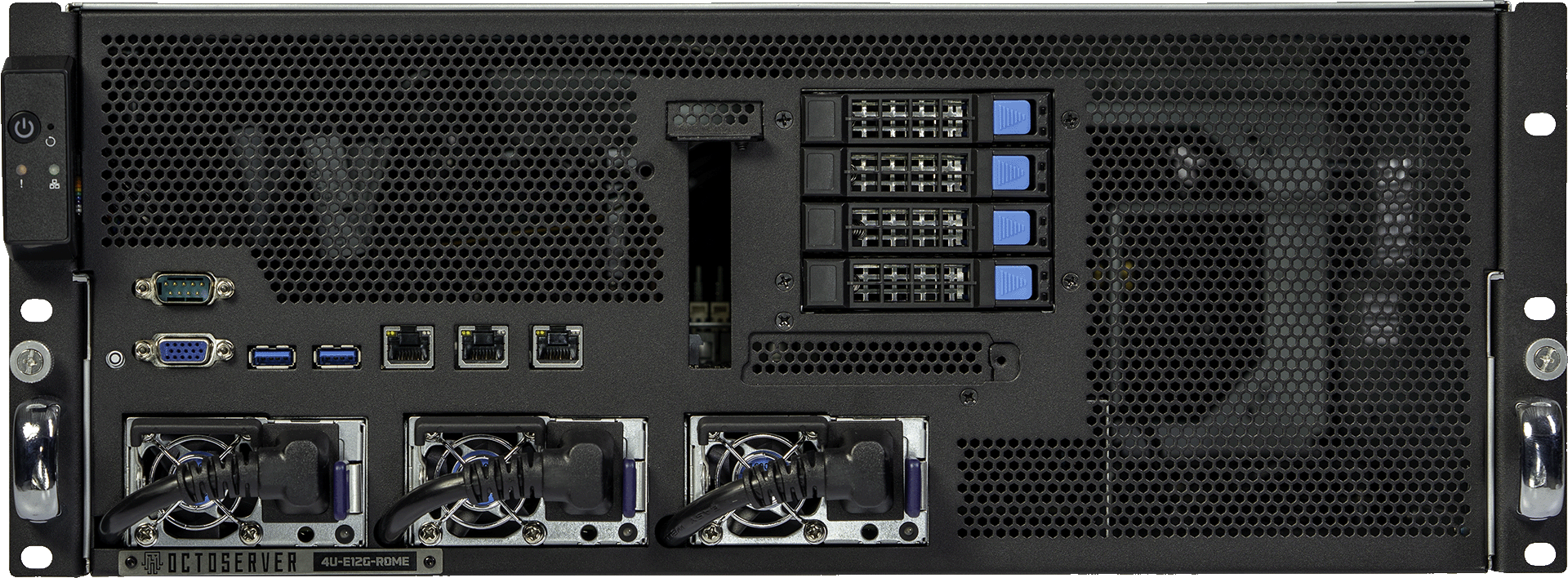

4U-E12G-ROME

#AI #ML #HPC

The Most Versatile 4U GPU Server Solution

The ultimate HPC/AI server designed to supercharge your computing capabilities. Powered by an AMD EPYC™ 7003 series processor, this server delivers unparalleled performance, making it the perfect choice for high-performance computing (HPC) and artificial intelligence (AI) applications.

Supports up to 12 GPUs, depending on configuration: up to 12 GPUs on PCIe 4.0 x8 links or up to 6 GPUs on PCIe 4.0 x16 links. Built to accommodate even the longest and thickest GPUs.
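As a rough guide to what the x8 versus x16 trade-off means, the sketch below computes the raw one-direction bandwidth of a PCIe link from its per-lane transfer rate and its lane count. It assumes the 128b/130b line encoding used by PCIe 3.0 and later and ignores packet and protocol overhead, so real-world throughput will be somewhat lower; the function name is illustrative only.

```python
# Approximate one-direction PCIe link bandwidth, before protocol overhead.
# Assumption: PCIe 3.0 and later use 128b/130b line encoding.
ENCODING_EFFICIENCY = 128 / 130


def link_bandwidth_gb_per_s(transfer_rate_gt_s: float, lanes: int) -> float:
    """Return raw one-direction bandwidth in GB/s for a PCIe link.

    transfer_rate_gt_s: per-lane rate in GT/s (16 for PCIe 4.0, 32 for PCIe 5.0)
    lanes: link width, e.g. 8 for x8 or 16 for x16
    """
    # Each transfer carries one bit per lane; 128 of every 130 bits are payload.
    payload_bits_per_s = transfer_rate_gt_s * 1e9 * lanes * ENCODING_EFFICIENCY
    return payload_bits_per_s / 8 / 1e9  # bits -> bytes -> GB


for generation, rate in (("4.0", 16), ("5.0", 32)):
    for width in (8, 16):
        bandwidth = link_bandwidth_gb_per_s(rate, width)
        print(f"PCIe {generation} x{width}: ~{bandwidth:.1f} GB/s per direction")
```

Under these assumptions, a PCIe 4.0 x8 link offers roughly 15.8 GB/s per direction versus roughly 31.5 GB/s for x16, and a PCIe 5.0 x16 link roughly doubles the x16 figure again.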

DESIGNED FOR

RTX 5090 / A5000

1X EPYC 7003

64 CORES

MAX MEMORY

4096 GB DDR4

PCI EXPRESS 4.0

16 GT/s

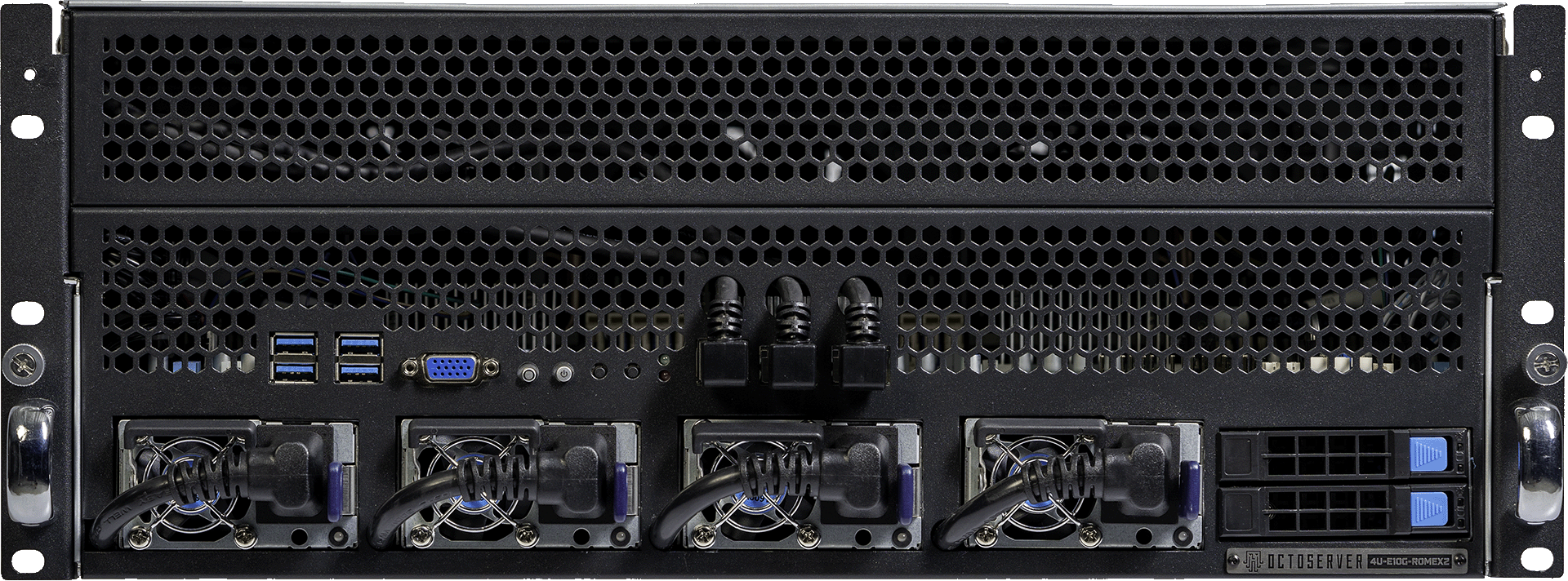

4U-E10G-ROMEX2

#AI #ML #HPC

Optimized for AI & ML Workloads

Featuring an impressive array of up to 10 Octoserver RTX 4090 AI GPUs, the OCTOSERVER 4U-E10G-ROMEX2 provides exceptional parallel processing power, enabling you to tackle the most demanding computational tasks with ease. Whether you’re involved in scientific research, financial modeling, or advanced machine learning, this server offers the speed and efficiency you need to achieve groundbreaking results.

DESIGNED FOR

RTX 5090

UP TO 2X EPYC 7003

128 CORES

MAX MEMORY

8192 GB DDR4

PCI EXPRESS 4.0

16 GT/s

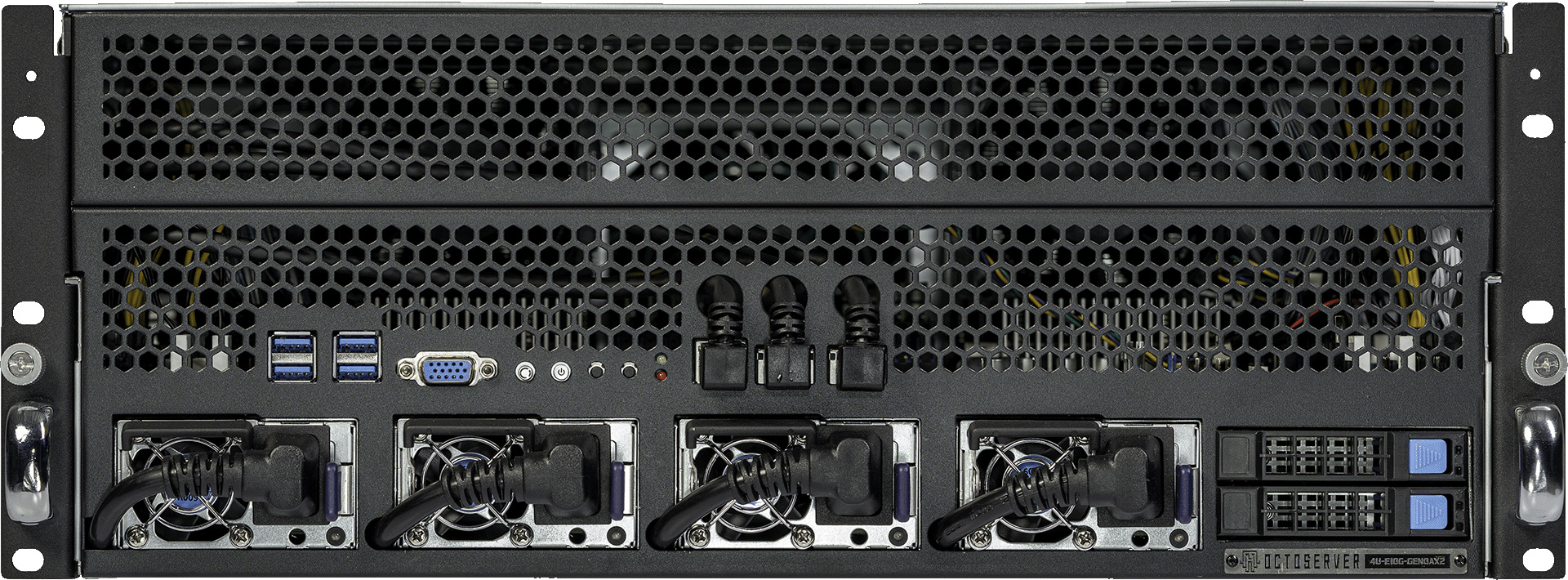

4U-E10G-GENOAX2

#AI #ML #HPC

PCIe 5.0 GPU Compute Server

The ultimate PCIe 5.0-compatible HPC/AI server designed to supercharge your computing capabilities. Powered by dual AMD EPYC™ 9004 processors, this server delivers unparalleled performance, making it the perfect choice for high-performance computing (HPC) and artificial intelligence (AI) applications.

Fully future-proof with PCIe 5.0 support. Whether you’re involved in scientific research, financial modeling, or advanced machine learning, this server offers the speed and efficiency you need to achieve groundbreaking results.

DESIGNED FOR

RTX 5090 / H100

UP TO 2X EPYC 9004

256 CORES

MAX MEMORY

12 TB DDR5

PCI EXPRESS 5.0

32 GT/s

About Us

5000+ Satisfied Customers Worldwide

Join 5000+ satisfied customers worldwide who trust us for their GPU server needs. We deliver unparalleled reliability, top-notch performance, and exceptional support to ensure your enterprise thrives in the AI era.

60,000+ GPU Nodes Delivered Worldwide

Our journey began with manufacturing GPU mining hardware, and at our peak, over 60,000 nodes ran on Octominer, contributing around 5% of the total ETH hashrate.

100,000+ ft² Manufacturing and R&D Space

Our state-of-the-art facilities span over 100,000 square feet across global locations. We continuously innovate to ensure our enterprise-grade servers meet the highest standards of performance and reliability for a variety of workloads.

Global Operations

ILLINOIS, USA

North America Distribution Warehouse,

North America RMA Center

TALLINN, ESTONIA

Manufacturing, Distribution, Product R & D, Customer Support Center

MANNHEIM, GERMANY

Electrical Engineering, R & D

Commitment to excellence and innovation

With a focus on innovation and reliability, Octoserver offers advanced GPU server solutions for AI-driven enterprises. Our state-of-the-art technology and dedication to customer satisfaction help businesses harness the full potential of AI.

FAQ – Frequently asked questions

Where are Octoserver GPU servers built and shipped from?

Which shipping options do you provide?

US and Canada

- DDP AIR EXPRESS, TAX INCLUDED (DOOR TO DOOR, 7-12 DAYS)

- DDP EXPRESS SEA, TAX INCLUDED (DOOR TO DOOR, 20-40 DAYS)

EU and the rest of the world

- AIR DDU (10-20 DAYS, DOOR TO DOOR)

How do I configure a GPU server?

Every Octoserver product page includes a built-in configurator.

Instead of emailing for a quote or waiting for someone to calculate a hardware list, you can simply choose the components directly on the page. As options are changed, the configuration and total price update automatically.

The configurator lets you adjust things like:

- AMD EPYC processor models

- GPU type and number of GPUs

- system memory

- NVMe storage drives

- networking cards

- operating system

- warranty options

Most customers use it to quickly build a system around a specific workload — for example AI training, inference infrastructure, rendering, or research computing.

If you already know the GPUs you need, the rest of the system can usually be configured in just a few minutes.

Which GPUs can be used in Octoserver GPU servers?

Octoserver platforms support a variety of modern AI and data-center GPUs.

Common configurations currently include:

- NVIDIA RTX 4090 AI

- NVIDIA RTX 5090 AI

- NVIDIA RTX 6000 Ada

- NVIDIA RTX 6000 PRO

- NVIDIA RTX 6000 PRO Blackwell Server Edition

- NVIDIA RTX 6000 PRO Blackwell Workstation Edition

- NVIDIA L40S

- NVIDIA H200 NVL

These GPUs are typically used in systems running workloads such as:

- large language model training

- AI inference services

- computer vision pipelines

- scientific simulation

- GPU-accelerated rendering

Exact GPU availability can change over time depending on supply and configuration.

How many GPUs can an Octoserver system support?

The number of GPUs depends on the server platform and configuration.

Most Octoserver systems are typically deployed with 4 to 8 GPUs per server, which offers the best balance between compute density, PCIe connectivity and system flexibility.

Current platforms include:

- 4U-E10G-ROMEX2: commonly configured with up to 8 GPUs

- 4U-E10G-GENOAX2: commonly configured with up to 8 GPUs

- 4U-E12G-ROME: supports up to 12 GPUs

In theory, the ROMEX2 and GENOAX2 platforms can be configured with more than 8 GPUs by using PCIe expansion hardware.

In practice, however, this often isn’t ideal. Modern GPUs consume a large number of PCIe lanes, and once many GPUs are installed there may be very few lanes remaining for other components.

That means things like NVMe storage drives or high-speed network cards can become limited or impossible to add.

For this reason, most real-world deployments keep GPU count per server at eight or fewer, leaving enough PCIe connectivity for storage, networking and other system components.

Larger environments usually scale by adding additional GPU servers rather than pushing extreme GPU counts into a single machine.
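The lane arithmetic behind this advice can be sketched as a simple budget check. The 160-lane host, the four x4 NVMe drives and the x16 network card used here are hypothetical values chosen for illustration, not the lane map of any specific Octoserver platform, and the model ignores PCIe switches and any lanes reserved for onboard devices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Device:
    name: str
    lanes: int  # PCIe link width the device is attached with
    count: int = 1


def remaining_lanes(usable_lanes: int, devices: list[Device]) -> int:
    """Lanes left over after every device gets its full link width.

    A negative result means the configuration does not fit without
    narrowing links or adding PCIe switch/expansion hardware.
    """
    used = sum(d.lanes * d.count for d in devices)
    return usable_lanes - used


# Hypothetical host with 160 usable CPU lanes (illustrative value only).
HOST_LANES = 160

for gpus in (6, 8, 10):
    config = [
        Device("GPU", lanes=16, count=gpus),
        Device("NVMe drive", lanes=4, count=4),  # hypothetical storage layout
        Device("network card", lanes=16),        # hypothetical x16 NIC
    ]
    left = remaining_lanes(HOST_LANES, config)
    print(f"{gpus} GPUs at x16 + storage + NIC: {left} lanes left")
```

With these assumed numbers, six GPUs leave 32 lanes spare, eight GPUs use the budget exactly, and ten GPUs overshoot it by 32 lanes, which is the point at which expansion hardware or narrower links become necessary.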

How much power does an AI GPU server typically use?

What kinds of workloads are Octoserver GPU servers used for?

These systems are designed for GPU-accelerated computing.

Typical use cases include:

- training AI models

- running large language models (LLMs)

- machine learning research

- inference infrastructure

- computer vision applications

- simulation and scientific computing

- GPU rendering workloads

The server architecture is optimized for high GPU density, stable PCIe connectivity and consistent airflow, which allows the GPUs to run under sustained load without thermal issues.

How long does it take to receive a configured server?

Delivery time depends on the hardware configuration and GPU availability.

In many cases servers ship within two to four weeks after the order is confirmed.

Large orders or uncommon configurations may require additional preparation time, especially when specific GPUs are involved.

Can the server be shipped directly to a datacenter?

Yes. Many customers have their systems delivered straight to a colocation facility or private datacenter.

Servers are typically shipped ready for installation and can include:

- rack mounting rails

- power cables

- operating system installation if requested

This allows the system to be placed in a rack and brought online quickly after arrival.

How do I request a customized quote for a GPU server?

You can configure every server we sell online to fit your specific needs. To request a customized quote for a GPU server, use our custom server configurator. This tool allows you to specify your exact requirements, including the type and number of GPUs, desired CPU configuration, memory, storage capacity, and any additional features or services you might need. Simply enter your specifications into the configurator, and it will generate a detailed quote tailored to your needs.

For further assistance, our support team is available via chat or email to answer any questions and ensure you get the perfect server configuration.

What is the difference between RTX 6000 PRO Blackwell Server Edition and the Workstation Edition?

Both GPUs are based on the same architecture, but they are built for different environments.

The RTX 6000 PRO Blackwell Server Edition is intended for rack servers. It uses passive cooling and relies on the airflow generated by the server chassis.

The RTX 6000 PRO Blackwell Workstation Edition includes an active cooler and is designed for workstation systems where the GPU needs its own fans.

Because Octoserver systems are built for dense rack deployments, we normally use server-grade passive GPUs that match the airflow design of the chassis.

If you’re unsure which option makes sense for your setup, we can help review the workload and recommend the right configuration.

Do you offer white-label or OEM GPU servers?

Yes.

Some partners prefer systems without external branding, especially when they are building their own infrastructure platforms.

In those cases we can provide OEM or white-label GPU servers, either unbranded or configured to match partner requirements.

This is commonly used by resellers, infrastructure providers and companies operating their own compute environments.

Still have questions?

Fill out our contact form, and our team will get back to you promptly.